Modern neural networks generate realistic artificial images and audio. This development will allow us to create movies, music and audio effects never seen before. Yet at the same time, the new technology may enable new digital ways to lie.

In response, we need a diverse and reliable toolbox for identifying artificial images and other generated content. This short blog post summarizes the main points on using the wavelet packet transform to identify artificially generated deepfake images. The key observation is that wavelet packet coefficients are distributed differently for real and fake images.

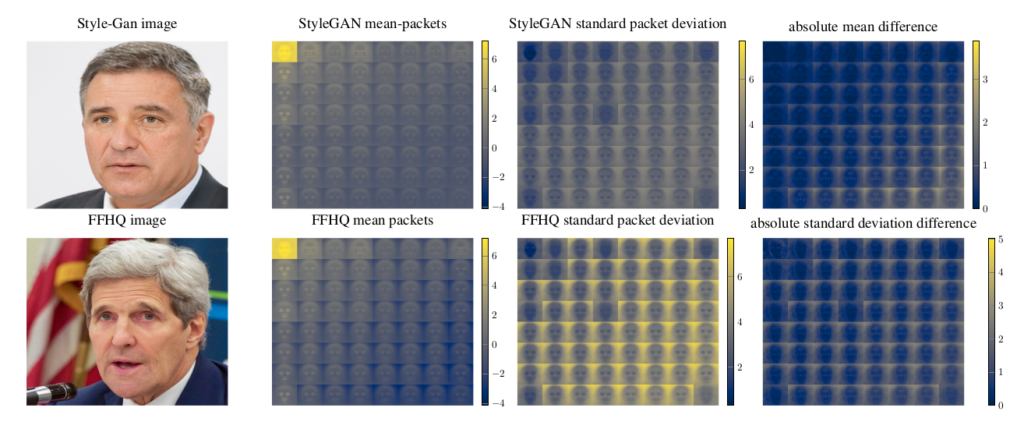

The image above illustrates this. The leftmost column shows a single real image from the Flickr-Faces-HQ data set as well as an artificially generated image for reference. To study the feasibility of wavelet packets for deepfake detection, level-three Haar wavelet packet coefficients are computed for 5k real and fake images using the PyTorch-Wavelet-Toolbox. Comparing the mean coefficients shown in the center as well as their standard deviations, we notice differences, especially as the frequency increases along the diagonal. The standard deviation also differs markedly in the background parts of the images, across all packets. These differences suggest that real and fake images can be separated based on their wavelet packet coefficients.
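To make the coefficient computation described above more concrete, here is a minimal sketch. It does not use the PyTorch-Wavelet-Toolbox; instead it hand-rolls an orthonormal Haar packet transform in NumPy, so the function names `haar_split` and `haar_packets`, the sub-band labels, and the random stand-in images are all assumptions made for illustration. Sign and ordering conventions may differ from the toolbox, and the statistics in the figure may have been computed with additional preprocessing.

```python
import numpy as np


def haar_split(x):
    """Split the last two axes of x into four half-resolution Haar sub-bands."""
    s = np.sqrt(2.0)
    # Low- and high-pass along the vertical axis (pairs of neighbouring rows).
    lo = (x[..., 0::2, :] + x[..., 1::2, :]) / s
    hi = (x[..., 0::2, :] - x[..., 1::2, :]) / s
    # Repeat along the horizontal axis (pairs of neighbouring columns).
    # The labels a/h/v/d are illustrative, not a library convention.
    return {
        "a": (lo[..., 0::2] + lo[..., 1::2]) / s,  # low-pass in both directions
        "h": (hi[..., 0::2] + hi[..., 1::2]) / s,  # high-pass vertically only
        "v": (lo[..., 0::2] - lo[..., 1::2]) / s,  # high-pass horizontally only
        "d": (hi[..., 0::2] - hi[..., 1::2]) / s,  # high-pass in both directions
    }


def haar_packets(x, level=3):
    """Full packet tree: unlike a plain wavelet transform, every sub-band is split again."""
    nodes = {"": x}
    for _ in range(level):
        nodes = {
            path + key: band
            for path, node in nodes.items()
            for key, band in haar_split(node).items()
        }
    return nodes


# Random stand-in for a batch of grayscale 128 by 128 images (not real data).
rng = np.random.default_rng(0)
images = rng.random((8, 128, 128))

packets = haar_packets(images, level=3)  # 4**3 = 64 packets of 16 by 16 coefficients

# Per-packet mean and standard deviation across the batch.
mean = {path: coeffs.mean(axis=0) for path, coeffs in packets.items()}
std = {path: coeffs.std(axis=0) for path, coeffs in packets.items()}
```

Running the same computation on a batch of real images and a batch of generated images, then comparing `mean` and `std` packet by packet, gives the kind of side-by-side comparison the figure shows.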

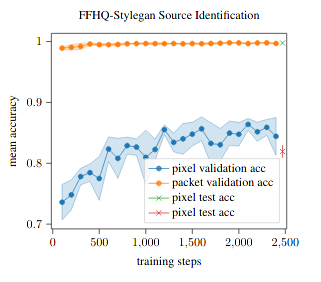

A first experiment explores the separability of images from the Flickr-Faces-HQ data set and StyleGAN-generated images. Working with 63k 128 by 128 images from each source, the task is to identify the origin of an image.

The plot above shows the convergence of a classifier trained to identify the source of an image. The wavelet packets allow the classifier to converge faster, with performance improvements at all stages of training.

If you would like to find out more, the source code and a preprint are freely available online.